Cloudflare's R2 Outage, Again - Credential Rotation Gone Wrong

On 21 March 2025, Cloudflare's R2 object storage service was disrupted for just over an hour, from 21:38 to 22:45 UTC. Every single write operation failed globally, and roughly 35% of reads followed suit. The knock-on effects hit Cache Reserve, Images, Stream, Log Delivery, and Vectorize. If your application relied on R2 that evening, it was broken.

If this sounds familiar, it should. Just six weeks earlier, R2 suffered a complete 59-minute outage when an operator accidentally disabled the entire Gateway service during routine abuse remediation. We wrote about that incident at the time, covering the cascade effect, the architectural lessons, and the importance of building resilience into your own infrastructure. Those points all still stand - but this second incident adds a new and arguably more concerning dimension.

What Actually Happened

Cloudflare's R2 Gateway Worker authenticates with the storage backend using a credential pair - an ID and a secret key. Like any responsible engineering team, they rotate these credentials regularly. The process is straightforward: generate new credentials, deploy them to the production gateway, then retire the old ones.

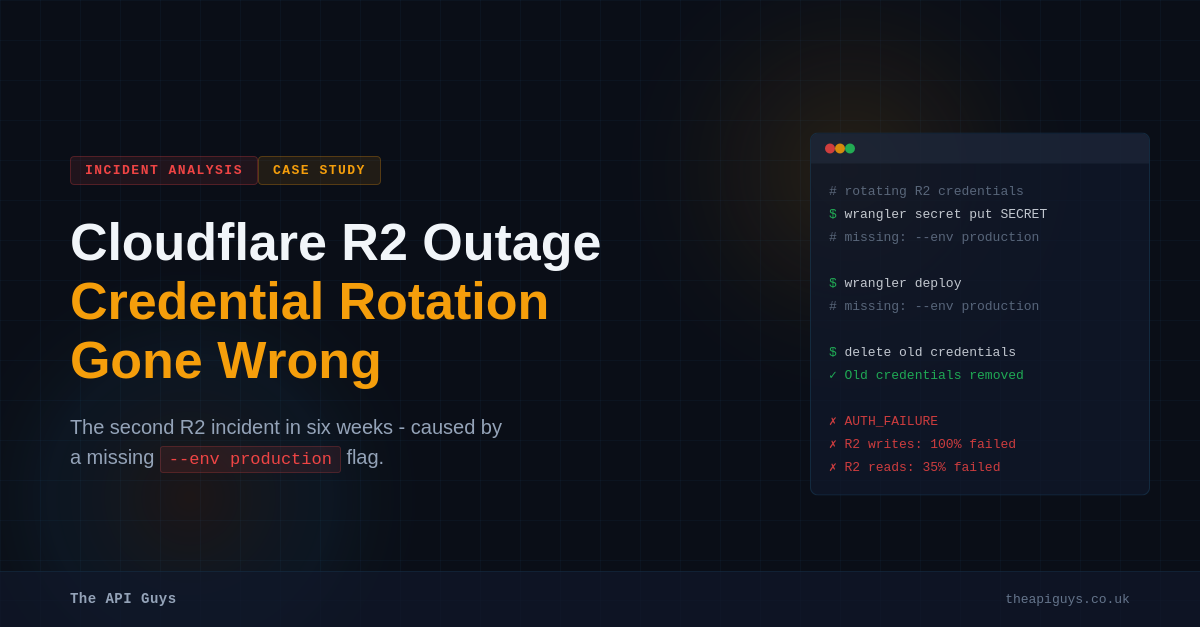

The problem on 21 March was a single missing CLI flag. The R2 engineering team used Cloudflare's own wrangler tool to deploy the fresh credentials. They ran wrangler secret put and wrangler deploy to push the new key pair - but they omitted --env production from both commands.

Without that flag, wrangler targets the Worker's default environment instead of a named one. For the R2 Gateway, the default environment was the development instance. The new credentials landed on a Worker that nobody was using in production. The team, believing the rotation was complete, then deleted the old credentials from the storage backend.

At that point, the production R2 Gateway was still using the old credentials - credentials that no longer existed. Authentication failed, writes stopped entirely, and a significant portion of reads broke with them.

The Timeline Tells a Story

The credential rotation began at 19:49 UTC. By 20:19, the team had deployed the new credentials - to the wrong environment. At 20:37, they deleted the old credentials, confident the switchover was done. Impact did not begin until 21:38, just over an hour later, because the deletion propagated gradually through the storage infrastructure.

It took until 22:36 - nearly an hour into the outage - for the team to discover that the credentials had been deployed to the wrong Worker. They found this by reviewing the production Worker's release history. By 22:45, the correct deployment was in place and service recovered.

What made diagnosis harder was that Cloudflare's own tooling did not surface which credentials the production gateway was actually using. The team had no straightforward way to verify that the rotation had landed in the right place before deleting the old keys.

Why This One Is More Concerning

The February outage was caused by a gap in the abuse remediation tooling - a missing safeguard that allowed a broad destructive action where only a targeted one should have been possible. That is a discrete, fixable problem, and Cloudflare pledged to address it with guardrails, restricted permissions, and two-party approval for critical actions.

This March incident is different. It was not an edge case in an admin tool. It was a core operational process - credential rotation - performed by the R2 engineering team themselves, using Cloudflare's own CLI tooling. The failure mode was not exotic: a missing flag on a command that defaults to the wrong environment. And critically, there was no verification step between deploying the new credentials and destroying the old ones.

What makes this more concerning is the pattern it reveals. Both outages were caused by manual processes with insufficient validation acting on a service that underpins a significant portion of Cloudflare's product suite. The February incident should have triggered a broad review of manual operational procedures touching R2. Instead, six weeks later, a different manual process with the same class of weakness took the same service down again.

The Deployment Automation Gap

This incident is fundamentally a case study in what happens when critical operational tasks sit outside your deployment pipeline. Cloudflare's regular code deployments presumably go through CI/CD with environment targeting, testing, and validation baked in. But the credential rotation was done by a human typing wrangler commands, relying on memory to include the correct flags.

This is a gap we see in organisations of all sizes. The application deployment is automated and well-tested, but the operational tasks around it - credential rotation, DNS changes, certificate renewals, database migrations - are still handled through manual runbooks or ad-hoc CLI commands. These tasks run less often, so they attract less automation investment, yet they frequently carry a larger blast radius when they go wrong.

The lesson is clear: if a process can take down production, it should be automated through the same pipeline that handles your deployments, with the same safeguards.

Practical Takeaways

In our February write-up, we focused on architectural resilience - diversifying storage providers, building for graceful degradation, keeping recovery tools independent of the systems they recover. All of that advice remains relevant and we would encourage you to read it if you have not already.

This incident adds a complementary set of lessons, focused specifically on how you manage operational processes.

Automate credential rotation through your deployment pipeline. If a human is typing CLI commands to rotate production credentials, the process is fragile. The rotation should flow through the same tooling that handles your other deployments, with environment targeting enforced by the pipeline rather than by memory.

Verify before you destroy. Never delete old credentials until you have confirmed - programmatically, not by assumption - that the new ones are in active use in production. A simple health check or log verification step would have caught this issue before it caused any impact.
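As a minimal sketch of that ordering, the function below deletes the old credential only after production itself reports that it is using the new one. The three callables (deploy_new, active_key_id, delete_old) are hypothetical hooks you would implement against your own deployment tooling and storage backend; nothing here reflects Cloudflare's actual implementation.

```python
from typing import Callable


def rotate_credential(
    deploy_new: Callable[[], None],
    active_key_id: Callable[[], str],
    delete_old: Callable[[], None],
    new_key_id: str,
) -> bool:
    """Deploy a new credential; delete the old one only after verification.

    All three callables are hypothetical hooks, not a real library API.
    Returns True if the rotation completed, False if it was halted.
    """
    deploy_new()

    # Ask production which key it is really using, rather than assuming
    # that the deploy step landed where we intended.
    observed = active_key_id()
    if observed != new_key_id:
        # Verification failed: leave the old credential in place.
        return False

    delete_old()
    return True
```

If the deploy step lands in the wrong environment, active_key_id still returns the old key, the function returns False, and the old credential survives. A real version would likely poll for a while and alert on failure.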

Challenge your tooling defaults. If your CLI defaults to a development or staging environment when no flag is provided, that is a footgun waiting to go off. Consider whether your default should be the safest option rather than the most convenient one. Better yet, require explicit environment selection for any production-touching operation and reject commands that omit it.
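One way to remove the silent default is a thin wrapper that refuses to build any command unless the environment is named explicitly. The sketch below is an assumption about how such a wrapper might look: the rotate-secret program name and the list of environment names are hypothetical. It only constructs the wrangler secret put and wrangler deploy commands; it does not run them.

```python
import argparse
from typing import List, Sequence

# Hypothetical environment names; replace with the ones your project defines.
ALLOWED_ENVS = ("development", "staging", "production")


def build_parser() -> argparse.ArgumentParser:
    parser = argparse.ArgumentParser(prog="rotate-secret")
    parser.add_argument("secret_name")
    # required=True means there is no fallback: omitting --env is an error.
    parser.add_argument("--env", required=True, choices=ALLOWED_ENVS)
    return parser


def wrangler_commands(argv: Sequence[str]) -> List[List[str]]:
    """Return the wrangler commands to run, always with an explicit --env."""
    args = build_parser().parse_args(argv)
    return [
        ["wrangler", "secret", "put", args.secret_name, "--env", args.env],
        ["wrangler", "deploy", "--env", args.env],
    ]
```

Calling wrangler_commands(["R2_SECRET"]) without --env makes argparse print a usage error and exit with a non-zero status, so the mistake surfaces before anything is deployed.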

Add logging that confirms credential identity. Cloudflare acknowledged they lacked visibility into which credentials the production gateway was actually using. If your services authenticate with external backends, make sure your logs include enough information to verify which credentials are active. A truncated key suffix in your logs is enough to confirm you are using what you think you are using.
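A small illustration of the truncated-suffix idea follows. The logger name, field names, and helper functions are hypothetical; the point is only that the log line identifies the key ID without exposing it in full, and never includes the secret itself.

```python
import logging

logger = logging.getLogger("storage.auth")  # hypothetical logger name


def masked_key_id(key_id: str, visible: int = 4) -> str:
    """Return the key ID with all but the last `visible` characters masked."""
    if visible <= 0 or len(key_id) <= visible:
        # Too short to truncate safely: reveal nothing.
        return "*" * len(key_id)
    return "*" * (len(key_id) - visible) + key_id[-visible:]


def log_active_credential(service: str, environment: str, key_id: str) -> str:
    """Log which credential a service is using, identified by suffix only."""
    message = (
        f"service={service} env={environment} "
        f"active_key_id={masked_key_id(key_id)}"
    )
    logger.info(message)
    return message
```

Emitting this line at startup and after every credential reload gives you something to compare against the key you believe you deployed.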

Treat operational runbooks as code. If your credential rotation process lives in a wiki page or a shared document, it is already out of date. Encode it as a script, a CI/CD job, or a custom Artisan command that enforces every step - including the verification step that was missing here.
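To make "runbook as code" concrete, here is a deliberately small sketch of a step runner that executes steps in order and stops at the first failure. The Step type, the step names, and the lambda actions are all placeholders for illustration; real actions would call your own tooling.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Step:
    name: str
    action: Callable[[], bool]  # returns True on success, False on failure


def run_runbook(steps: List[Step]) -> List[str]:
    """Run steps in order, stopping at the first one that fails.

    Returns the names of the steps that completed successfully.
    """
    completed: List[str] = []
    for step in steps:
        if not step.action():
            break
        completed.append(step.name)
    return completed


# Placeholder rotation runbook with a simulated verification failure.
rotation = [
    Step("generate new credential", lambda: True),
    Step("deploy to production", lambda: True),
    Step("verify production uses new credential", lambda: False),
    Step("delete old credential", lambda: True),
]
print(run_runbook(rotation))
# prints ['generate new credential', 'deploy to production']
```

Because the destructive step sits after the verification step in the list, it cannot run when verification fails, and nobody has to remember that ordering under pressure.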

For Laravel Teams Specifically

If you are managing Laravel applications, think about how you handle credential rotation today. Are your API keys and database credentials rotated through your CI/CD pipeline, or does someone SSH into the server and edit the .env file? Do you have a way to verify that the new credentials are active before the old ones are revoked?

Consider using environment-specific configuration managed through your deployment tooling rather than manual file edits. Tools like Laravel Envoy or custom Artisan commands can wrap credential rotation in a repeatable, testable process. If you use Laravel Forge, its environment management can be driven through the API as part of an automated pipeline rather than through the dashboard manually.

The cost of automating these processes is almost always less than the cost of a single outage. Cloudflare learned that lesson twice in six weeks. You do not have to.

What Cloudflare Is Doing About It

Cloudflare's post-mortem was thorough and public, which deserves credit. They have committed to adding credential identity logging, requiring explicit confirmation before old keys are retired, routing key rotations through their hotfix release tooling instead of manual CLI commands, and enforcing two-person validation for the process. They are also building automated health checks that test new keys before the old ones are removed.

These are solid steps for the credential rotation process specifically. The broader question is whether these improvements will drive a systematic review of all manual operational procedures touching critical infrastructure, or whether the next outage will come from yet another under-automated process that nobody thought to audit. Two R2 outages in six weeks, both caused by manual processes with insufficient guardrails, suggest the problem is systemic rather than specific.

If you are concerned about the resilience of your infrastructure or want help automating your operational processes, get in touch with us. Whether it is credential management, deployment pipelines, or architectural review, we can help you build the guardrails before you need them.