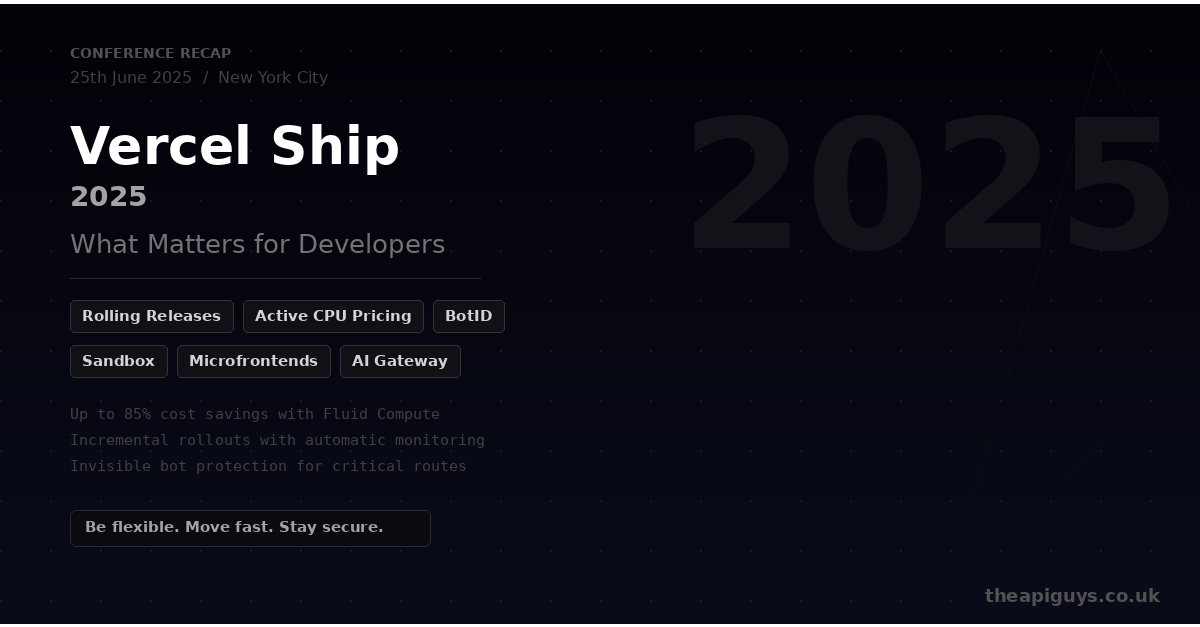

Vercel Ship 2025 - What Matters for Developers

Vercel Ship 2025 took place on 25th June in New York City, and it was packed with announcements. The overarching theme was clear: Vercel is positioning itself as more than a frontend deployment platform. The push towards what they are calling the "AI Cloud" brings new compute primitives, smarter pricing, security tooling, and deployment workflows that are worth paying attention to, whether or not you are building AI-powered applications.

Here is our breakdown of the announcements that matter most for development teams.

Rolling Releases

This is arguably the most practically useful announcement from the event. Rolling Releases allow you to incrementally roll out new deployments to a percentage of your users, with built-in monitoring that can pause or abort the rollout if things go wrong.

If metrics like Time to First Byte degrade or error rates spike, the rollout stops automatically. You configure rollout stages per project and choose whether each stage advances automatically after a set time or waits for manual approval. Rollout changes propagate globally in under 300 milliseconds, and you can control everything through the dashboard, CLI, REST API, or Terraform.
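To make the staged rollout idea concrete, here is a minimal sketch of the control loop: walk through the stages, check metrics at each one, and abort as soon as a threshold is breached. This is not Vercel's implementation or API. The types, the `runRollout` function, the `observe` callback, and the threshold values are all hypothetical and exist only for illustration.

```typescript
// Hypothetical types for illustration; not part of any Vercel API.
interface Stage { trafficPercent: number }
interface Metrics { errorRate: number; ttfbMs: number }
interface Thresholds { maxErrorRate: number; maxTtfbMs: number }
type Outcome =
  | { status: "completed" }
  | { status: "aborted"; atPercent: number };

// A stage is healthy when both metrics stay within their thresholds.
function isHealthy(metrics: Metrics, thresholds: Thresholds): boolean {
  return (
    metrics.errorRate <= thresholds.maxErrorRate &&
    metrics.ttfbMs <= thresholds.maxTtfbMs
  );
}

// Advance stage by stage; stop at the first stage whose metrics degrade.
function runRollout(
  stages: Stage[],
  observe: (trafficPercent: number) => Metrics,
  thresholds: Thresholds,
): Outcome {
  for (const stage of stages) {
    const metrics = observe(stage.trafficPercent);
    if (!isHealthy(metrics, thresholds)) {
      return { status: "aborted", atPercent: stage.trafficPercent };
    }
  }
  return { status: "completed" };
}

// Assumed example configuration: 5% -> 50% -> 100% of traffic.
const stages: Stage[] = [
  { trafficPercent: 5 },
  { trafficPercent: 50 },
  { trafficPercent: 100 },
];
const thresholds: Thresholds = { maxErrorRate: 0.01, maxTtfbMs: 800 };
```

The value of the pattern is that a bad deployment is caught while it only reaches a small share of users, instead of after it has replaced production for everyone.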

For teams shipping frequently, this is a significant safety net. It is the kind of feature that removes the anxiety from deploying to production without slowing you down. Rolling Releases are now generally available.

Fluid Compute and Active CPU Pricing

Vercel's Fluid Compute has been around for a while, but the new Active CPU pricing model changes the economics significantly. Traditional serverless billing charges for wall time: the entire duration your function is running, even if it is sitting idle waiting for an API response or database query.

Active CPU pricing splits the bill into three parts: Active CPU time is charged only while your code is actually executing, Provisioned Memory covers idle wait time at a fraction of the cost (1/11th the Active CPU rate), and Invocations are charged per function call.

The practical impact is substantial. If you have an AI call that takes 30 seconds to respond but only uses 300 milliseconds of actual compute, you pay the Active CPU rate for those 300 milliseconds rather than for the full 30 seconds, and the remaining wait is billed at the much lower Provisioned Memory rate. Vercel reports teams seeing up to 85% cost savings with these optimisations. Default execution times have also been increased to 300 seconds, up from the previous 60-90 seconds.
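The arithmetic behind that example can be sketched in a few lines. The rates below are not Vercel's real prices: they are relative units chosen so that idle wait costs 1/11th of active CPU, as described above, and the wall-time baseline is assumed to bill the whole duration at the CPU rate. Memory size and the per-invocation charge are left out to keep the comparison simple.

```typescript
// Relative, made-up rates (assumption): idle wait costs 1/11th of active CPU.
interface RelativeRates {
  cpuPerSecond: number;    // cost units per second of active CPU
  memoryPerSecond: number; // cost units per second of idle wait
}

const assumedRates: RelativeRates = { cpuPerSecond: 11, memoryPerSecond: 1 };

// Wall-time billing (assumed baseline): the full duration is charged at the CPU rate.
function wallTimeCost(wallSeconds: number, rates: RelativeRates): number {
  return wallSeconds * rates.cpuPerSecond;
}

// Active CPU billing: executing time at the CPU rate, idle wait at the memory rate.
function activeCpuCost(
  wallSeconds: number,
  cpuSeconds: number,
  rates: RelativeRates,
): number {
  const idleSeconds = Math.max(wallSeconds - cpuSeconds, 0);
  return cpuSeconds * rates.cpuPerSecond + idleSeconds * rates.memoryPerSecond;
}

// The 30-second AI call that uses only 300 ms of compute.
const before = wallTimeCost(30, assumedRates);
const after = activeCpuCost(30, 0.3, assumedRates);
const savings = 1 - after / before;

console.log(`wall time: ${before.toFixed(1)} units`);
console.log(`active CPU: ${after.toFixed(1)} units`);
console.log(`savings: ${(savings * 100).toFixed(0)}%`);
```

Under these assumptions the call costs about 33 units instead of 330. Real savings depend on actual rates, memory size, and how I/O-bound the workload is, so treat this as a way to reason about your own numbers and not as a forecast.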

For anyone running API routes, server-side rendering, or backend logic on Vercel, this is worth evaluating against your current costs.

BotID

Bot management is becoming increasingly important as automated threats get more sophisticated. Modern bots execute JavaScript, solve CAPTCHAs, and behave like real users. Traditional defences like header checks and rate limiting are not always enough.

BotID, built in partnership with Kasada, is an invisible CAPTCHA for protecting critical routes. It injects lightweight, obfuscated code that evolves on every page load and resists replay, tampering, and static analysis. No more clicking traffic lights or identifying crosswalks.

The integration is straightforward. Install the package, set up rewrites, mount the client component, and verify requests server-side. It is particularly useful for protecting routes that trigger expensive operations like LLM-powered endpoints, checkouts, logins, and signups. Vercel has indicated they plan to make BotID available even for applications not hosted on their platform.
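The server-side step is the one that actually protects the route, so it is worth sketching. The code below is not the BotID API: `protectRoute`, `BotVerdict`, and the `verify` callback are hypothetical stand-ins, and the check is synchronous here purely to keep the example self-contained. In a real integration the verdict would come from BotID's own server-side verification.

```typescript
// Hypothetical shapes for illustration; not the BotID package's types.
interface BotVerdict { isBot: boolean }
interface RouteResult { status: number; body: string }

// Wrap an expensive handler so it only runs when the request is not
// classified as a bot. `verify` stands in for the real server-side check.
function protectRoute(
  verify: () => BotVerdict,
  expensiveHandler: () => RouteResult,
): () => RouteResult {
  return () => {
    if (verify().isBot) {
      // Reject before any costly work (LLM call, checkout, signup) happens.
      return { status: 403, body: "Access denied" };
    }
    return expensiveHandler();
  };
}

// Example: guarding a stand-in for an LLM-powered endpoint.
const generate = (): RouteResult => ({ status: 200, body: "generated text" });
const guardedForHuman = protectRoute(() => ({ isBot: false }), generate);
const guardedForBot = protectRoute(() => ({ isBot: true }), generate);
```

The design point is ordering: the verification runs first and short-circuits, so a blocked request never reaches the code that costs money.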

Vercel Sandbox

If you are building anything that involves running user-generated or AI-generated code, Sandbox is worth knowing about. It provides isolated, ephemeral microVM environments for running untrusted Node.js or Python code safely. Each environment supports execution times up to 45 minutes and can scale to hundreds of concurrent instances.

Vercel built this internally for v0, their AI code generation tool, and it is now available as a standalone SDK that works anywhere, not just on the Vercel platform. It uses the same Active CPU pricing model, so CPU is billed only while code is actually executing.

Microfrontends

For larger teams, the microfrontends announcement is significant. It allows you to split large applications into smaller, independently deployable units. Each team can build, test, and deploy using their own stack while Vercel handles integration and routing.

This is particularly relevant for organisations looking to modernise legacy applications incrementally. Rather than rewriting everything at once, you can migrate piece by piece while maintaining a unified experience for users. Microfrontends entered limited beta at Ship 2025.

AI Gateway and AI SDK

The AI Gateway provides a single endpoint to access models from OpenAI, Anthropic, Google, xAI, and other providers. It handles routing, fallbacks, and provider switching with minimal code changes. Usage-based billing at provider list prices and bring-your-own-key support keep it flexible.

Combined with the AI SDK, which unifies interaction patterns across providers in TypeScript, the developer experience for building AI-powered features has become noticeably smoother. Switching between model providers is a configuration change rather than a code rewrite.
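As an illustration of what "a configuration change rather than a code rewrite" can look like, the sketch below keeps the model choice in a small config object and resolves it with an ordered fallback list. It does not use the AI Gateway or AI SDK APIs. The `GatewayConfig` shape, the `resolveModel` function, and the placeholder model identifiers are all assumptions made for this example.

```typescript
// Model identifiers in "provider/model" form (placeholder names, assumed format).
type ModelId = `${string}/${string}`;

// Hypothetical config: a preferred model plus ordered fallbacks.
interface GatewayConfig {
  primary: ModelId;
  fallbacks: ModelId[];
}

// Return the first model that is currently available, or undefined if none is.
function resolveModel(
  config: GatewayConfig,
  isAvailable: (model: ModelId) => boolean,
): ModelId | undefined {
  return [config.primary, ...config.fallbacks].find((m) => isAvailable(m));
}

// Switching providers means editing this object, not the calling code.
const config: GatewayConfig = {
  primary: "provider-a/model-x",
  fallbacks: ["provider-b/model-y", "provider-c/model-z"],
};

// Simulate an outage at the primary provider.
const chosen = resolveModel(config, (m) => !m.startsWith("provider-a/"));
console.log(chosen);
```

Application code that asks for "the resolved model" stays untouched when the preferred provider changes or goes down, which is the property the gateway approach is aiming for.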

Our Take

Vercel Ship 2025 was heavily AI-focused, which reflects where the industry is heading. But the announcements with the broadest impact are arguably the least AI-specific ones. Rolling Releases, better compute pricing, and bot protection are valuable for any production application, regardless of whether it touches an LLM.

The Active CPU pricing model is particularly interesting. It aligns the cost model with how modern web applications actually work (heavy on I/O and light on sustained CPU usage), and it makes Vercel a more competitive option for running backend logic alongside your frontend.

If you are building on Vercel or considering it for a project, these changes are worth factoring into your decision. And if you want to discuss how any of these announcements might affect your stack, get in touch.